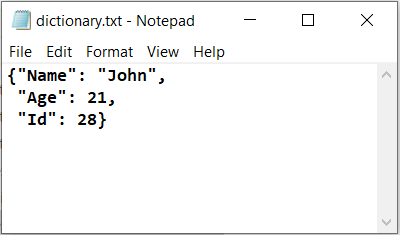

How does a word get into the dictionary?

This is one of the most common questions we get, and it’s a great one. The answer involves one of the most misunderstood things about dictionaries, so let’s set the record straight: a word doesn’t become a “real word” when it’s added to the dictionary. It’s actually the other way around: we add words to the dictionary because they’re real, because they’re really used by real people in the real world.

In other words, our lexicographers add a word to the dictionary when they determine that it’s used by a lot of people, that those people use it in largely the same way, and that it’s useful for a general audience. All of these points are important. Our lexicographers look for use not just by one person, but by a lot of people. Of course, many words have different shades of meaning for different people. But to be added to the dictionary, a word must have a shared meaning (that is, it must communicate a widely agreed-upon meaning from one person to the next). If everyone used a word in a completely different way, we wouldn’t be able to give it a definition, right?

Prescriptive vs. Descriptive

As we define it, our mission as a dictionary is to document words as they are actually used.

Frequently asked questions about the dictionary

It’s the newly added words that generate the most attention and the most questions. Here are some of the most frequently asked, such as how a word gets into the dictionary, along with our honest answers.

For word embedding features in sentiment analysis, the influence of the thematic relevance of the training texts is stronger on movie and phone reviews, but weaker on tweets and lyrics. These two latter domains are more sensitive to corpus size and training method, with GloVe outperforming Word2vec. “Injecting” extra intelligence from lexicons and generating sentiment-specific word embeddings are two prominent alternatives for increasing the performance of word embedding features.
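One simple way to read “injecting” lexicon knowledge is to append a lexicon-based polarity score to an averaged embedding vector. The sketch below illustrates that idea only; it is not the method of the study summarized above, and the embeddings, the lexicon, and the `text_features` helper are all made up for illustration.

```python
# Hypothetical toy data: 2-dimensional embeddings and a tiny polarity lexicon.
EMBEDDINGS = {
    "great": [0.8, 0.1],
    "boring": [0.1, 0.9],
    "movie": [0.4, 0.4],
}
LEXICON = {"great": 1.0, "boring": -1.0}


def text_features(tokens, embeddings, lexicon):
    """Average the embeddings of known tokens and append a lexicon polarity sum."""
    dim = len(next(iter(embeddings.values())))
    known = [embeddings[t] for t in tokens if t in embeddings]
    if known:
        # Column-wise mean over the vectors of all known tokens.
        mean = [sum(column) / len(known) for column in zip(*known)]
    else:
        # No known tokens: fall back to a zero vector of the right size.
        mean = [0.0] * dim
    # Tokens missing from the lexicon contribute a neutral score of 0.
    polarity = sum(lexicon.get(t, 0.0) for t in tokens)
    return mean + [polarity]


features = text_features(["great", "movie"], EMBEDDINGS, LEXICON)
print(features)
```

The resulting vector has one more dimension than the embeddings and could be passed to any standard classifier. A real setup would use pretrained vectors and a published sentiment lexicon instead of these toy values.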

A word embedding is a low-dimensional, dense and real-valued vector representation of a word. Word embeddings have been used in many NLP tasks. They are usually generated from a large text corpus. The embedding of a word captures both its syntactic and semantic aspects. Tweets are short, noisy and have unique lexical and semantic features that are different from other types of text. Therefore, it is necessary to have word embeddings learned specifically from tweets. In this paper, we present ten word embedding data sets. In addition to the data sets learned from just tweet data, we also built embedding sets from the general data and the combination of tweets with the general data. The general data consist of news articles, Wikipedia data and other web data. These ten embedding models were learned from about 400 million tweets and 7 billion words from the general text. In this paper, we also present two experiments demonstrating how to use the data sets in some NLP tasks, such as tweet sentiment analysis and tweet topic classification tasks.

This work investigates the role of factors like training method, training corpus size and thematic relevance of texts in the performance of word embedding features on sentiment analysis of tweets, song lyrics, movie reviews and item reviews. We also explore specific training or post-processing methods that can be used to enhance the performance of word embeddings in certain tasks or domains. Our empirical observations indicate that models trained with multithematic texts that are large and rich in vocabulary are the best in answering syntactic and semantic word analogy questions.
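To make the idea of a dense vector representation and a word analogy question concrete, here is a minimal sketch. The three-dimensional vectors are invented for illustration (real embeddings have hundreds of dimensions and are learned from a corpus), and `cosine` and `solve_analogy` are hypothetical helper names, not part of any library.

```python
import math

# Toy embeddings, invented for illustration only.
EMBEDDINGS = {
    "king": [0.9, 0.8, 0.1],
    "queen": [0.9, 0.1, 0.8],
    "man": [0.1, 0.9, 0.1],
    "woman": [0.1, 0.1, 0.9],
    "apple": [0.2, 0.5, 0.4],
}


def cosine(u, v):
    """Cosine similarity between two vectors of equal length."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def solve_analogy(a, b, c, embeddings):
    """Return the word d that best completes 'a is to b as c is to d'."""
    # Vector arithmetic: d should lie close to b - a + c.
    target = [
        vb - va + vc
        for va, vb, vc in zip(embeddings[a], embeddings[b], embeddings[c])
    ]
    # Exclude the three query words, as is usual in analogy evaluation.
    candidates = [w for w in embeddings if w not in {a, b, c}]
    return max(candidates, key=lambda w: cosine(target, embeddings[w]))


print(solve_analogy("man", "king", "woman", EMBEDDINGS))
```

With these toy vectors the nearest remaining word to `king - man + woman` is `queen`. Analogy benchmarks apply the same procedure to many such questions and report the fraction answered correctly.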
